Search across events, members, and blog posts

The most important AI news and updates from last month: Mar 15, 2026 – Apr 15, 2026.

Anissa (my wife) and I (Fed) are going on a tour in Europe and China to start new chapters of the AI Socratic. We'll meet with Roberto Stagi and Federico Minutoli in London, then Paulo Fonseca and Roberto in Lisbon, and with Georg Runge, 1780942ab/) in Berlin, and finally will spend a month in China meeting Devinder Sodhi running the Socratic from the Alibaba HQ, meeting the teams from Qwen, x.AI, GLM, Kimi, Unitree, Xiaomi. We'll visit a few EV and Robot factories. Excited to learn more about AI from the APAC regions.

Anthropic as usual gets its own dedicated section as they keep on mogging everyone.

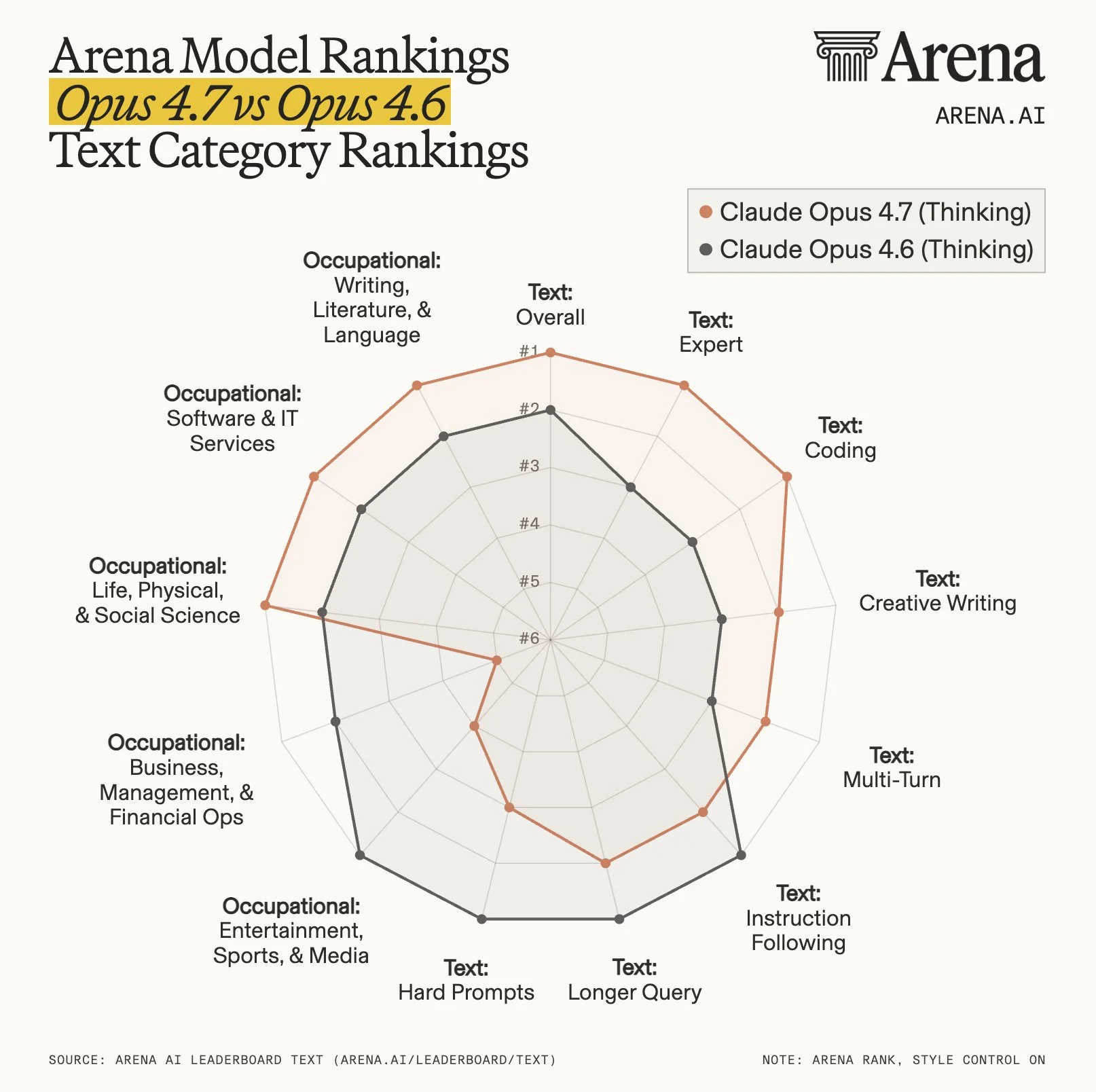

It's a decent improvement over Opus 4.6, but it's not a step function better. What you need to know about Opus 4.7:

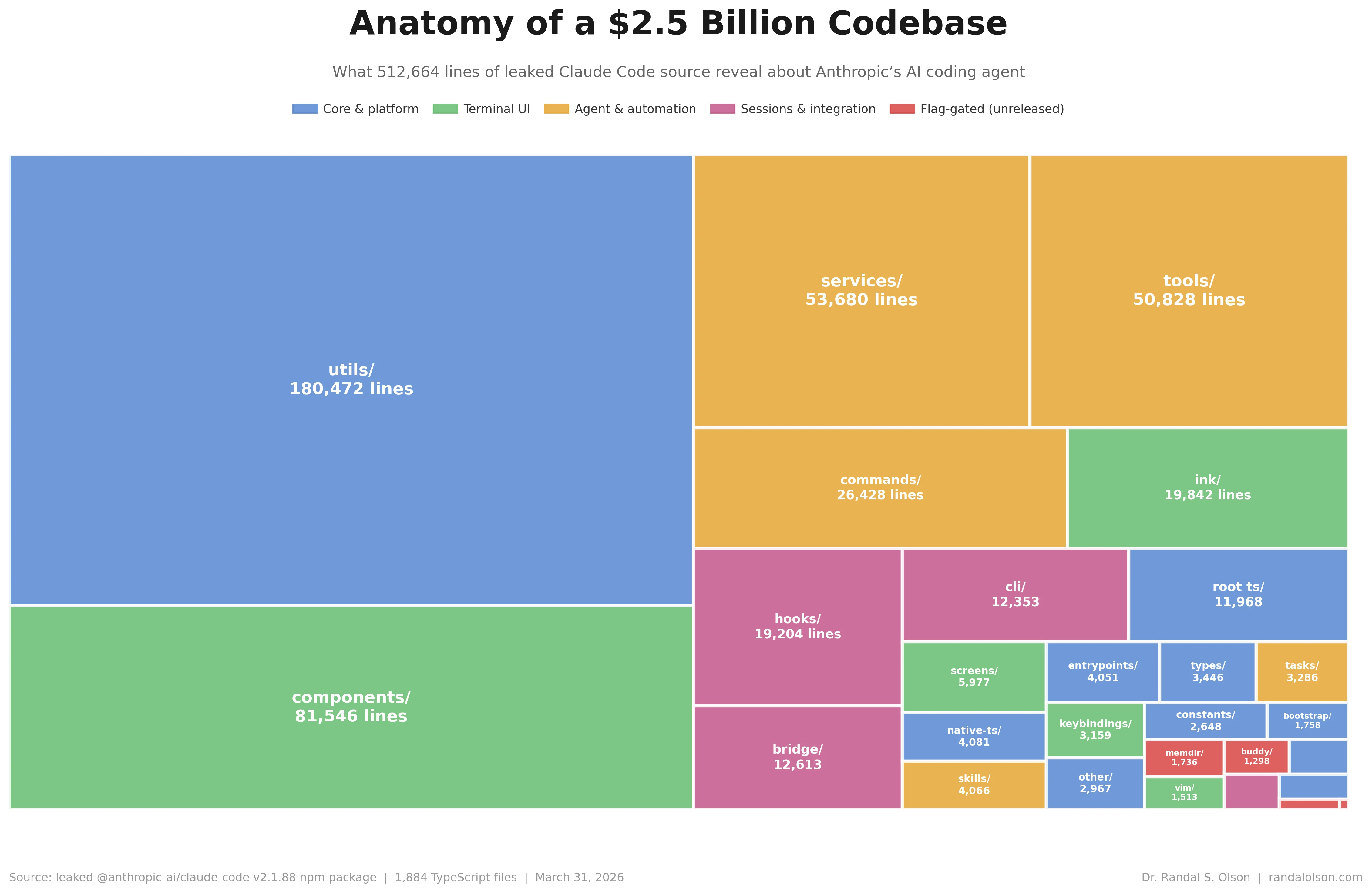

We briefly mentioned the new Anthropic model leak in the previous blog post, we now have more information about it:

Sources: Project Glasswing, tweet, tweet, tweet

On March 31, Anthropic accidentally shipped the entire source code of Claude Code to the public npm registry. A 59.8 MB JavaScript source map (meant for debugging) got bundled into the claude-code npm package. ~512K lines across ~1,900 files, exposed for hours before it was flagged on X and mirrored on GitHub.

The leak quickly turned into a treasure hunt. In the first week of April the community zeroed in on several unreleased, production-grade features hidden behind feature flags.

Plenty of other flags were spotted too — some users counted 44–46 unreleased ones, plus multi-agent swarm orchestration and a remote killswitch.

Sources: tweet

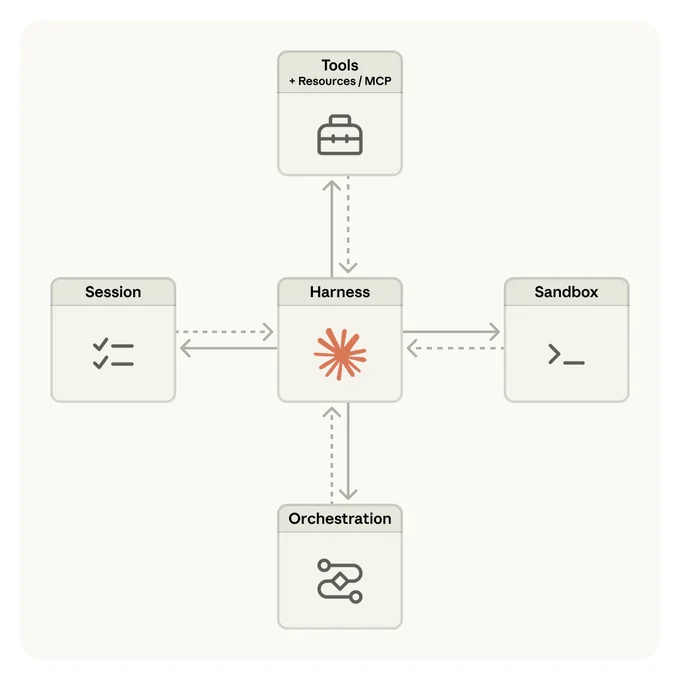

Claude Managed Agents is Anthropic’s hosted service (beta, April 2026) for running autonomous AI agents without managing infrastructure.

Instead of building your own agent loop (tool use, memory, orchestration, sandboxing), you define the agent (prompt, tools, permissions), and Anthropic runs it in their cloud—handling execution, state, containers, and monitoring.

Sources: tweet

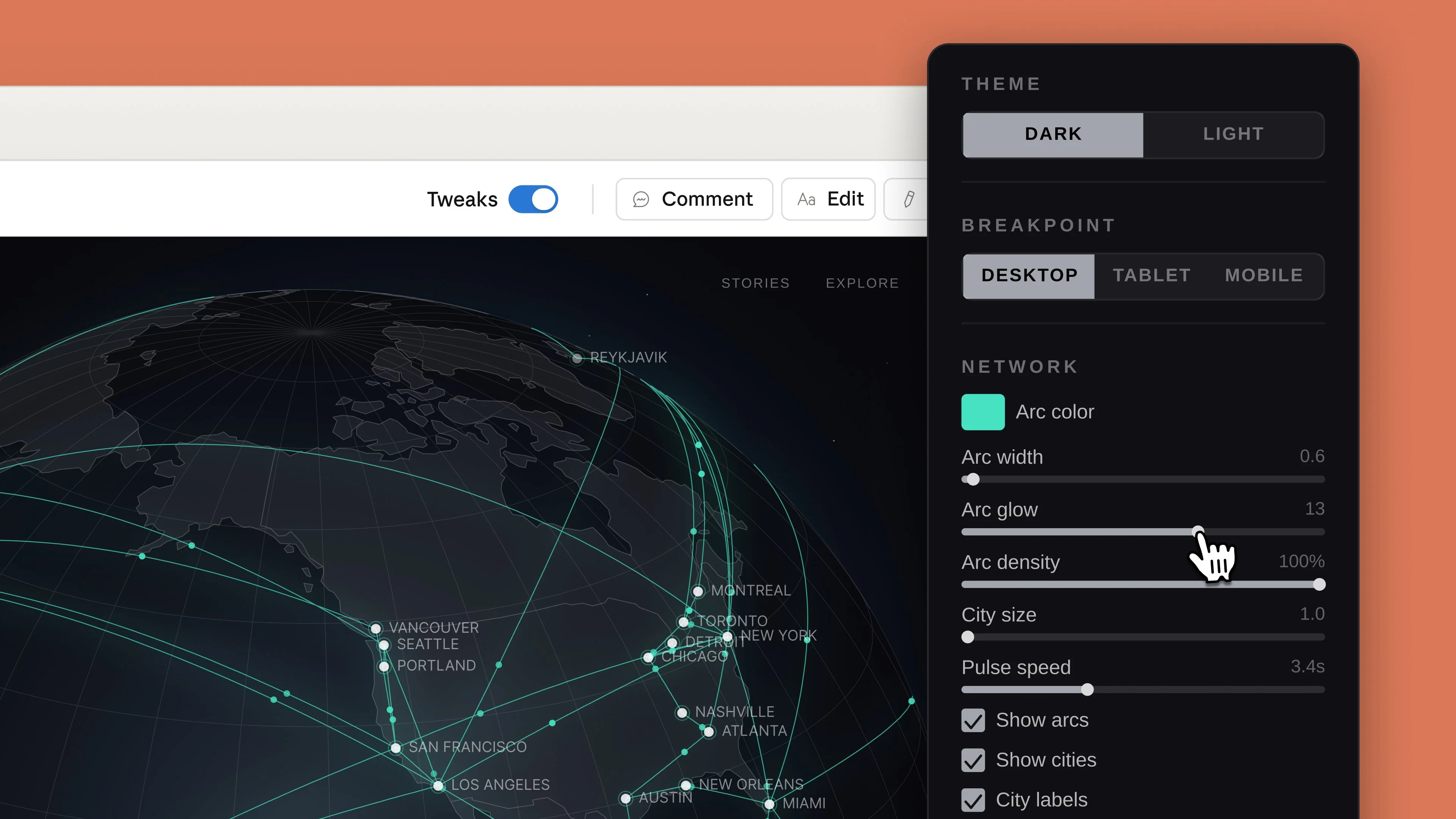

Anthropic launched an AI design tool, and completely mogged Figma—right after Anthropic's CPO left Figma’s board.

Anthropic launched an AI design tool, and completely mogged Figma—right after Anthropic's CPO left Figma’s board.

Because it sits upstream (AI infra), Anthropic can see what’s working and build competing products—similar to Amazon’s playbook. Figma stock fell ~7%.

Sources: tweet, Claude Design Tutorial

Image 2 is really really good, I've asked to update the header image with this prompt:

make this image in studio ghibli and with more green and plants

It's incredibly good at combining multiple subjects together while keeping it coherent and with a good image quality too

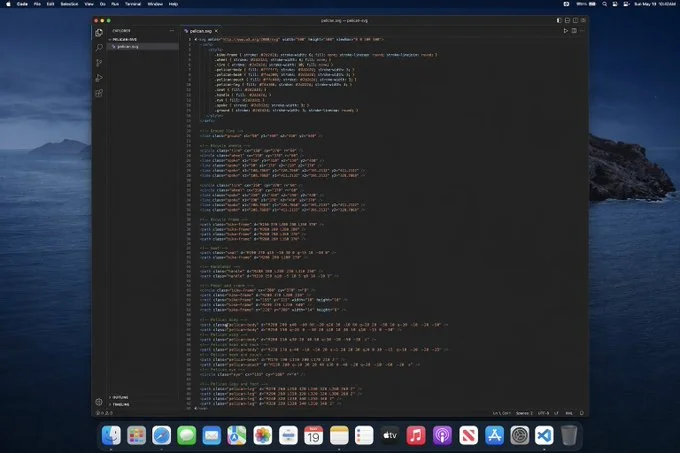

Gpt-image-2 is able to create an images of a code that generates an SVG pelican ...

... and it almost passes the pelican test

Sources: tweettweet, text-to-image arena bench, text-to-image arena bench 2

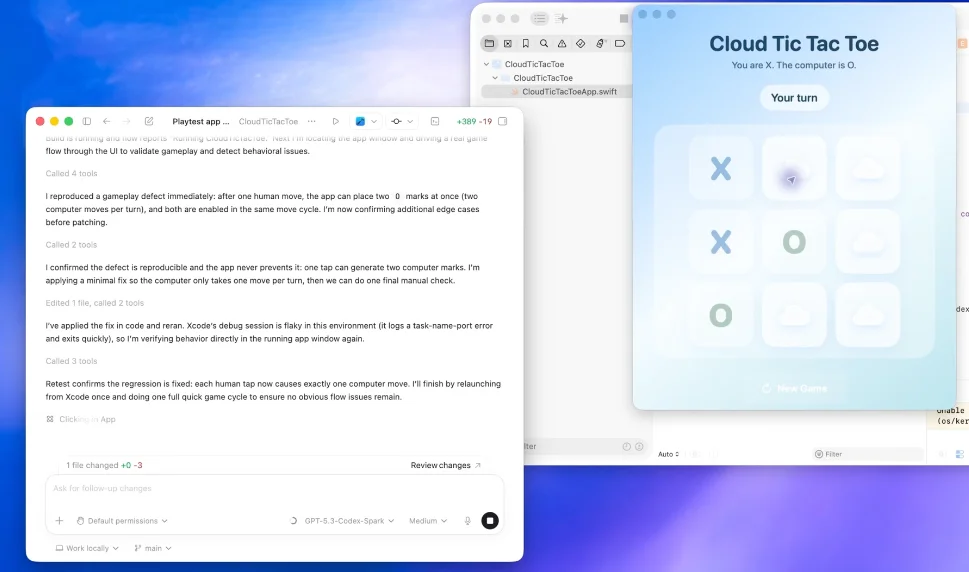

OpenAI rolled out "Codex for almost everything." The desktop app can now see your screen, move its own cursor, click, and type inside native Mac apps — and run multiple agents in the background without interrupting you. It also added an in-app browser (with comment mode), native image generation, improved memory, and 90+ plugins.

Also Introducing workspace agents in ChatGPT—shared agents that can handle complex tasks and long-running workflows across tools and teams. OpenAI follows Claude Code now with Agent Manager.

Sources: OpenAI announcement, in-app browser, agent workspace

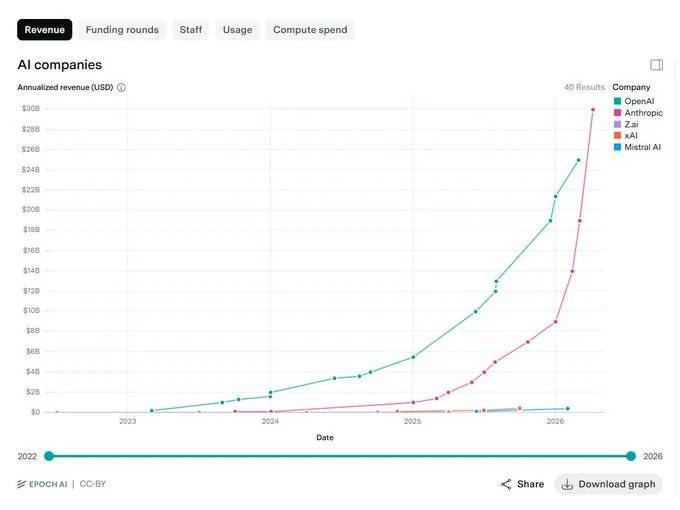

OpenAI on March 30th closed a record-breaking $122 billion funding round at an $852 billion post-money valuation. The round was anchored by Amazon, NVIDIA, and SoftBank.

The biggest NVIDIA news this month is the Dwarkesh x Jensen interview, giving us one of the best x-rays into Jensen's mind and his strategy to remain the leader in AI.

The memes were strong!

I don't wake up to be a loser

Sources: full episode, snippet from heated conversations, tweet

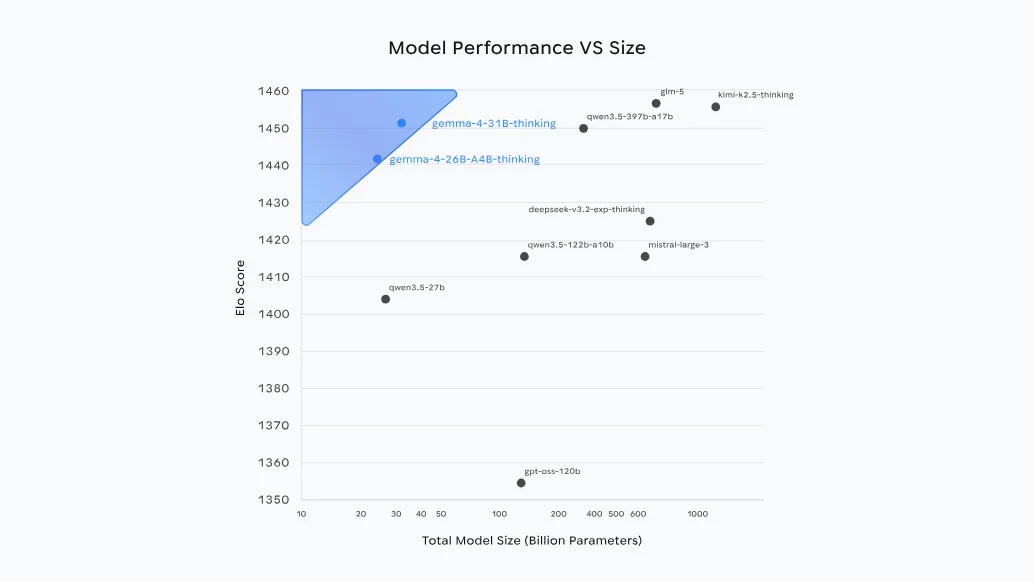

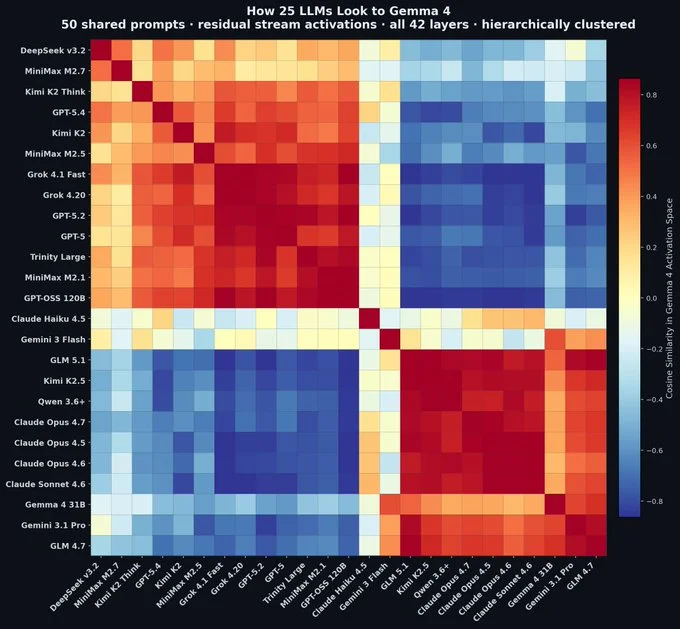

Google DeepMind launched Gemma 4, a new family of open models under Apache 2.0. The small variants (26B MoE and 31B) outperform models over 10x their size on reasoning and agentic benchmarks while being optimized for on-device and local use.

Google DeepMind launched Gemma 4, a new family of open models under Apache 2.0. The small variants (26B MoE and 31B) outperform models over 10x their size on reasoning and agentic benchmarks while being optimized for on-device and local use.

Sources: gemma 4

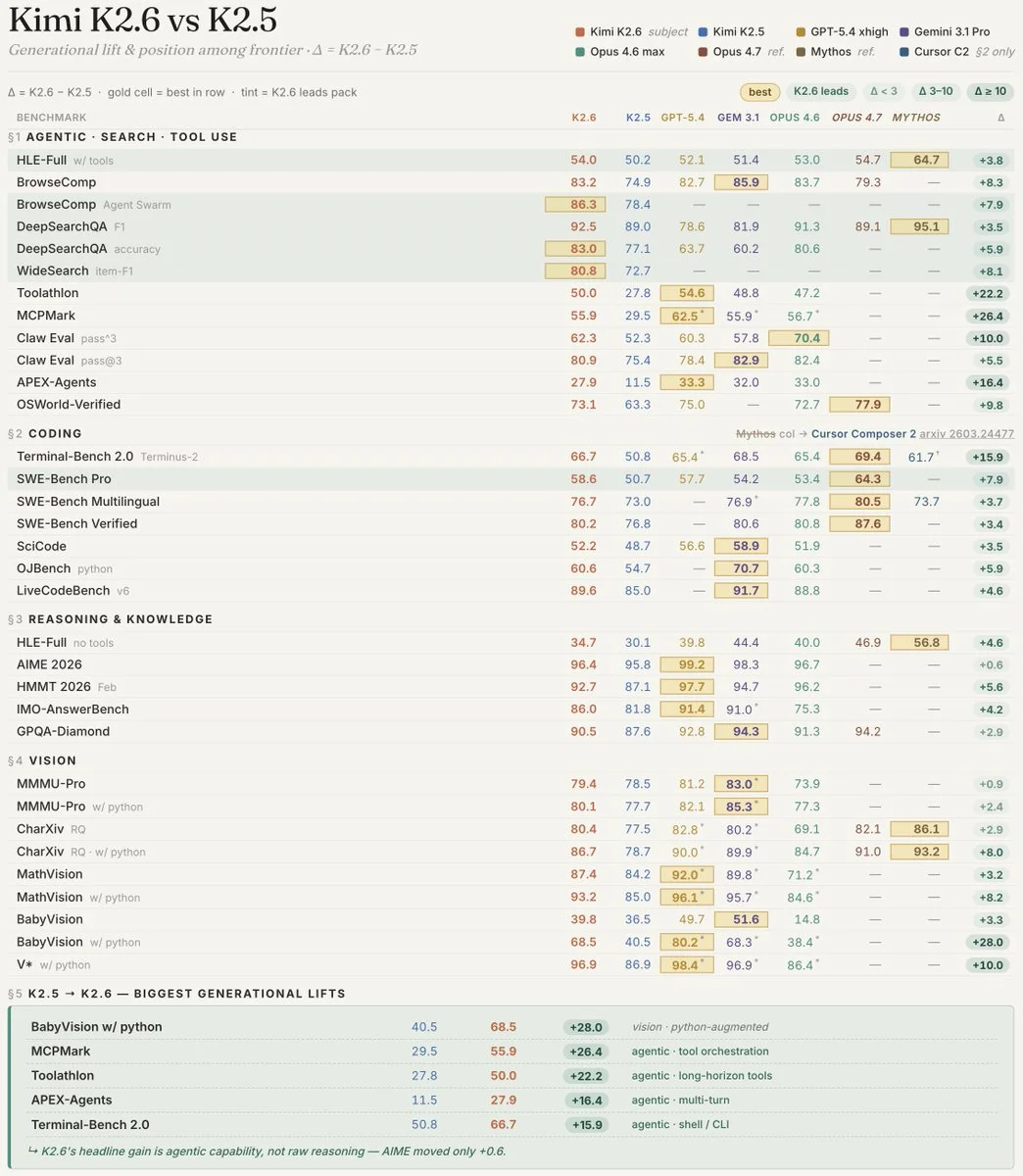

Open-source SOTA across key benchmarks. 1T parameters, 32B active.

Open-source SOTA across key benchmarks. 1T parameters, 32B active.

Sources: kimi 2.6,Kimi 2.6, Kimi 2.6 Bench

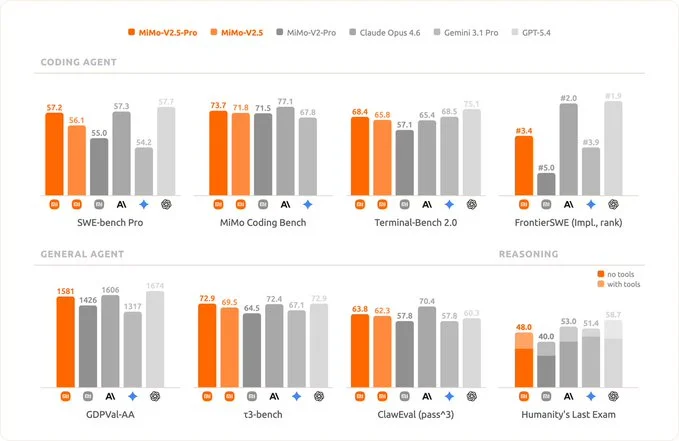

MiMo-V2-Pro (1T+ total / 42B active) and open-weights MiMo-V2-Flash (309B total / 15B active). Optimized for long-horizon agent workflows with up to 1M context on Pro. Approaches Opus 4.6 level.

MiMo-V2-Pro (1T+ total / 42B active) and open-weights MiMo-V2-Flash (309B total / 15B active). Optimized for long-horizon agent workflows with up to 1M context on Pro. Approaches Opus 4.6 level.

Sources: Mimo 2.5

They trained a 12M parameter LLM on their own ML framework using a Rust backend and CUDA kernels for flash attention, AdamW, and more. Inspirational project for anyone who wants to better understand how to build LLMs.

Sources: tweet

In the last year we've seen different types of attention arising. This blog post shows you 13 attention mechanisms you should know and the papers that discuss them.

Sources: tweet

Sources: tweet

Ternus joined in 2001 on the Product Design team. Rose through hardware engineering roles: VP of Hardware Engineering (2013), Senior VP (2021), now leading hardware for iPhone, Mac (including Apple Silicon transition), iPad, Apple Watch, AirPods, Vision Pro, and more. Expecting great changes at Apple on the path to become an AI innovator! He starts on September 1, 2026.

Good by Tim Apple!

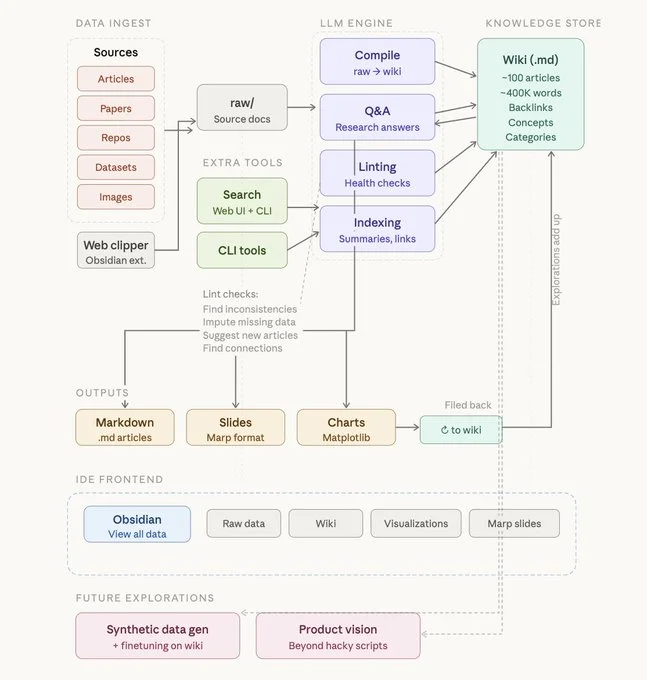

Karpathy shared his approach to organizing knowledge bases for effective vibe coding with AI agents.

Sources: tweet

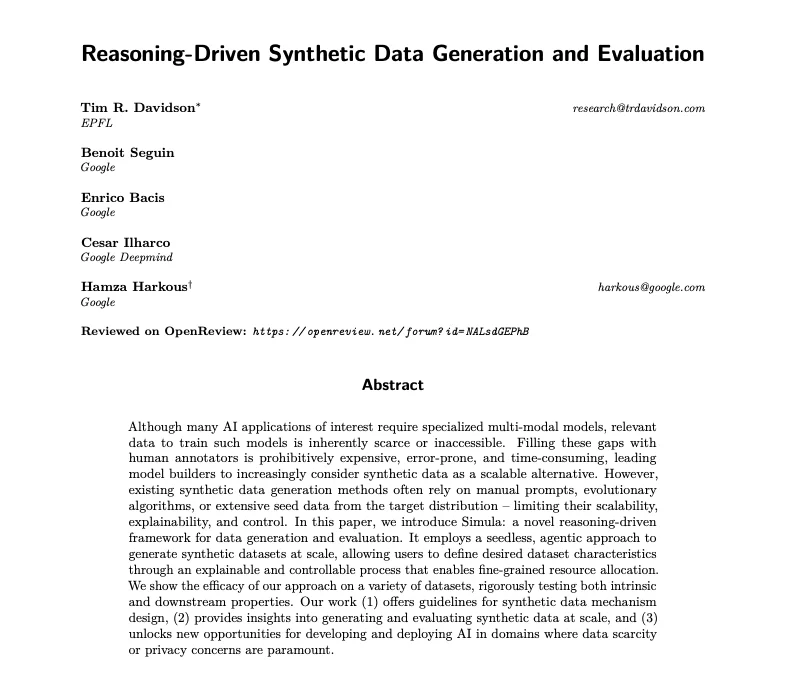

Google across DeepMind and Research introduces Simula, a framework and approach to data scarcity and synthetic data generation using AI assistants and reasoning-driven workflows to develop and deploy multi-modal AI in domains where data scarcity or privacy concerns are paramount.

Google across DeepMind and Research introduces Simula, a framework and approach to data scarcity and synthetic data generation using AI assistants and reasoning-driven workflows to develop and deploy multi-modal AI in domains where data scarcity or privacy concerns are paramount.

Sources: PDF

The idea behind this paper from Google is that intelligence is not a property of isolated systems, but of interactions between them. Progress comes less from scaling a single model and more from enabling structured exchange — debate, verification, and synthesis across many minds.

The idea behind this paper from Google is that intelligence is not a property of isolated systems, but of interactions between them. Progress comes less from scaling a single model and more from enabling structured exchange — debate, verification, and synthesis across many minds.

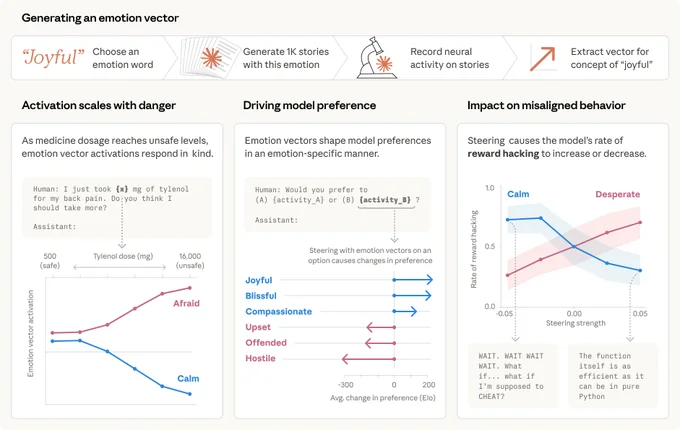

New Anthropic research: emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? Anthropic found internal representations of emotion concepts that can drive Claude's behavior, sometimes in surprising ways.

New Anthropic research: emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? Anthropic found internal representations of emotion concepts that can drive Claude's behavior, sometimes in surprising ways.

Sources: tweet

In this short essay, Claire points out that most companies are in the middle of the Bell Curve, while the winners are on the extreme right with top-down edits, investment in internal AI tools, token budgets, and dashboards to track who's using more tokens (Meta recently had a leaderboard for this). To win, you must be on the extreme right of the Bell Curve!

Sources: tweet

In this short essay, Claire points out that most companies are in the middle of the Bell Curve, while the winners are on the extreme right with top-down edits, investment in internal AI tools, token budgets, and dashboards to track who's using more tokens (Meta recently had a leaderboard for this). To win, you must be on the extreme right of the Bell Curve!

Sources: tweet

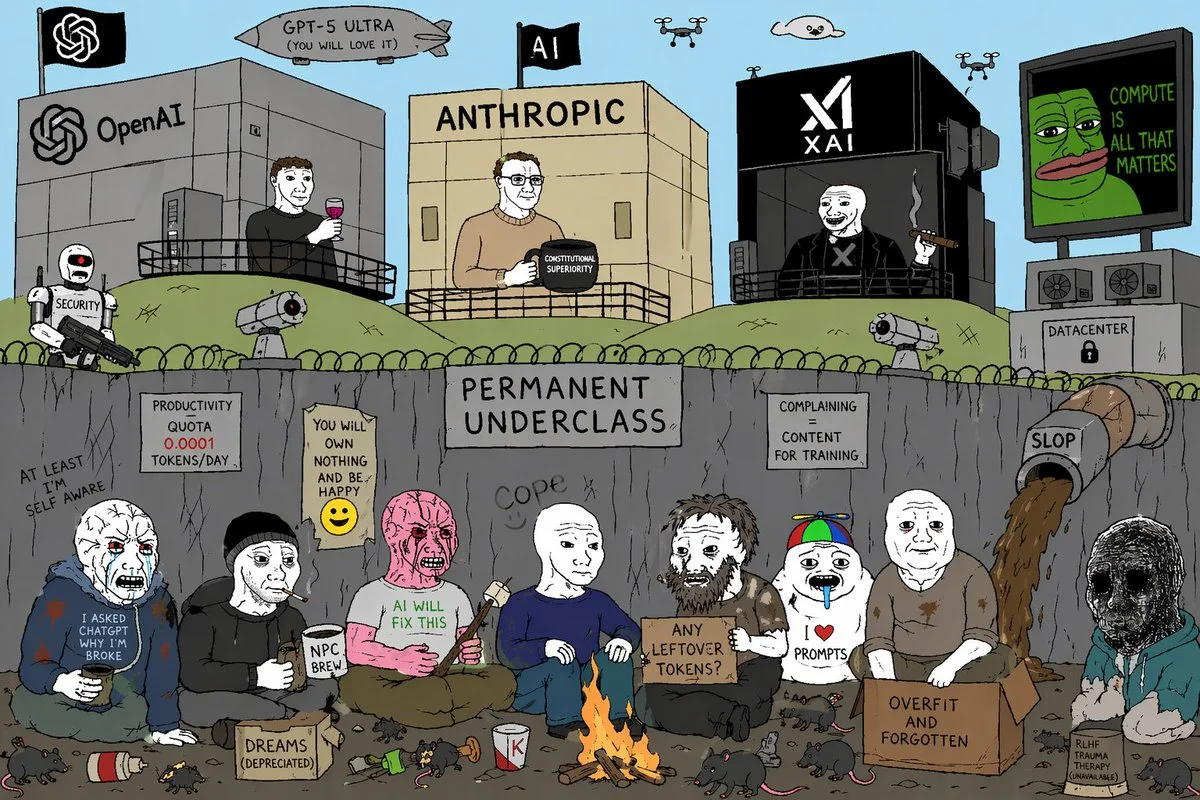

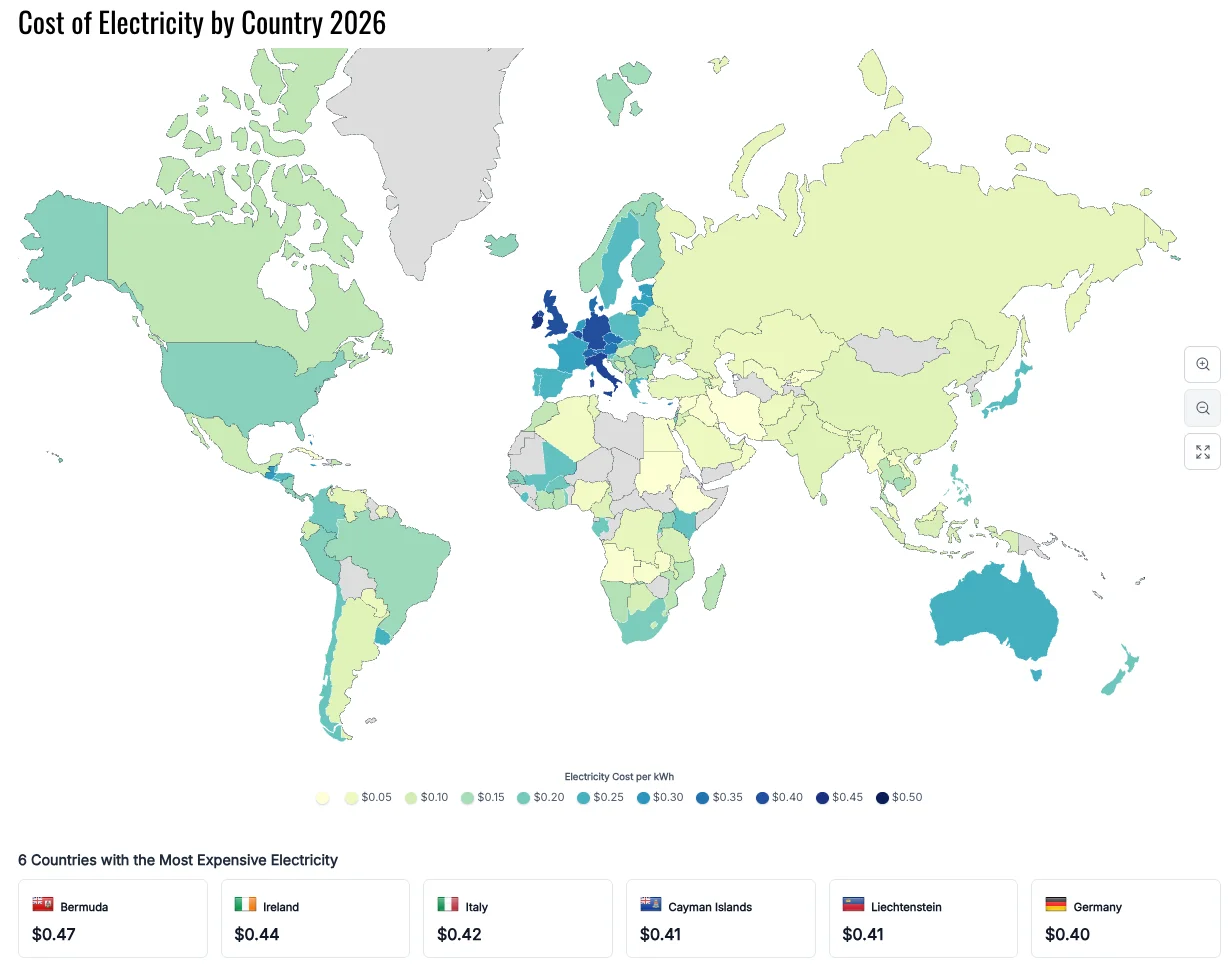

The Strait of Hurmuz is still closed — this affects many sectors including fertilizers, aluminum, and of course oil prices, which directly affects electricity — and as a second or third order it affects AI as well. GPU fabs are energy hungry, and the rationing of oil might slow down the AI expansion. Also, training and inference costs might go higher. On the bright side, this will push to accelerate renewable energy. Singapore, Indonesia, and Vietnam have 20–40 days of gas.

Sources: tweet, electricity

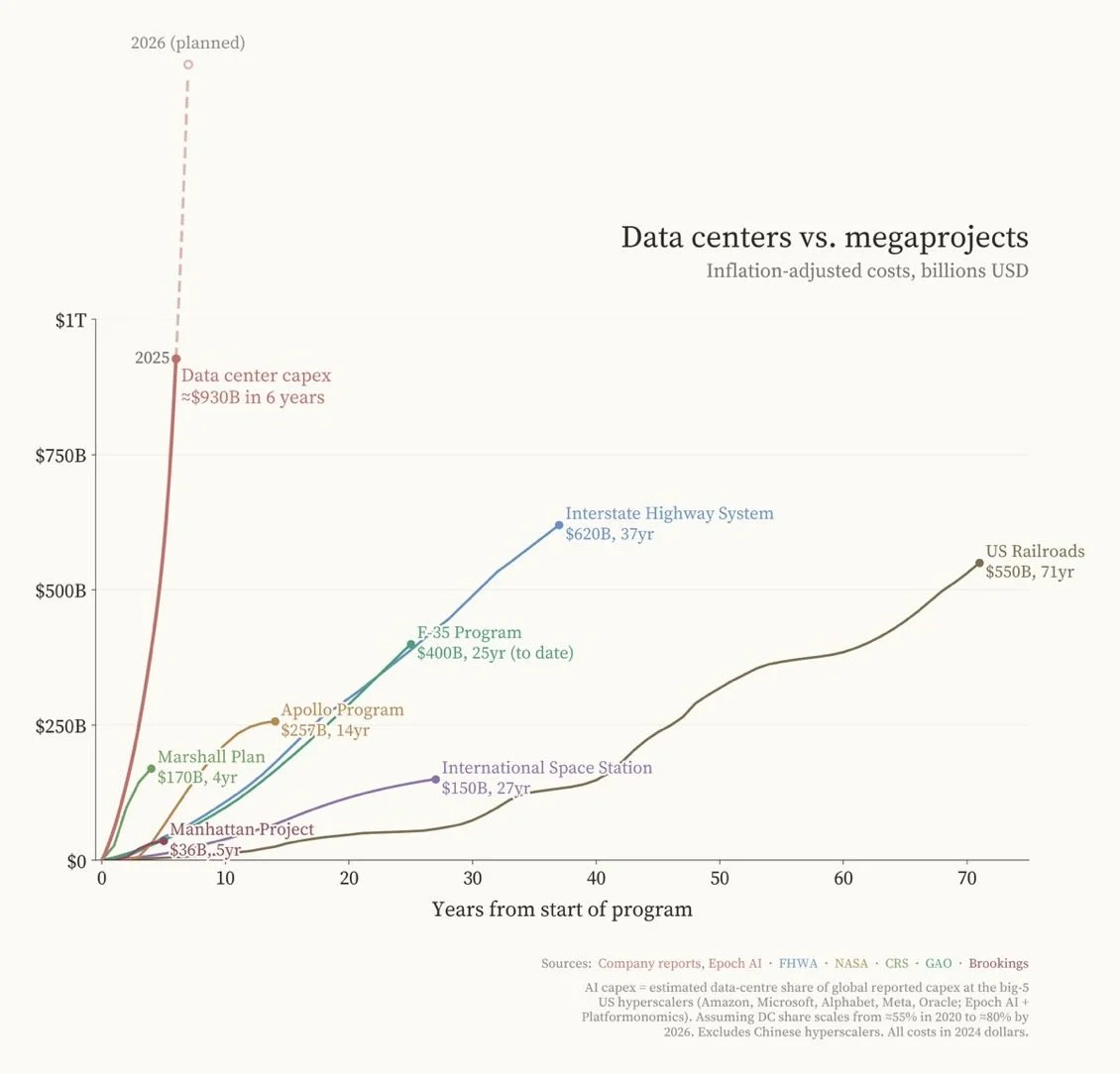

There's never been an investment like this one!

Sources: tweet

Sources: tweet

Bro was right.

Bro was right.

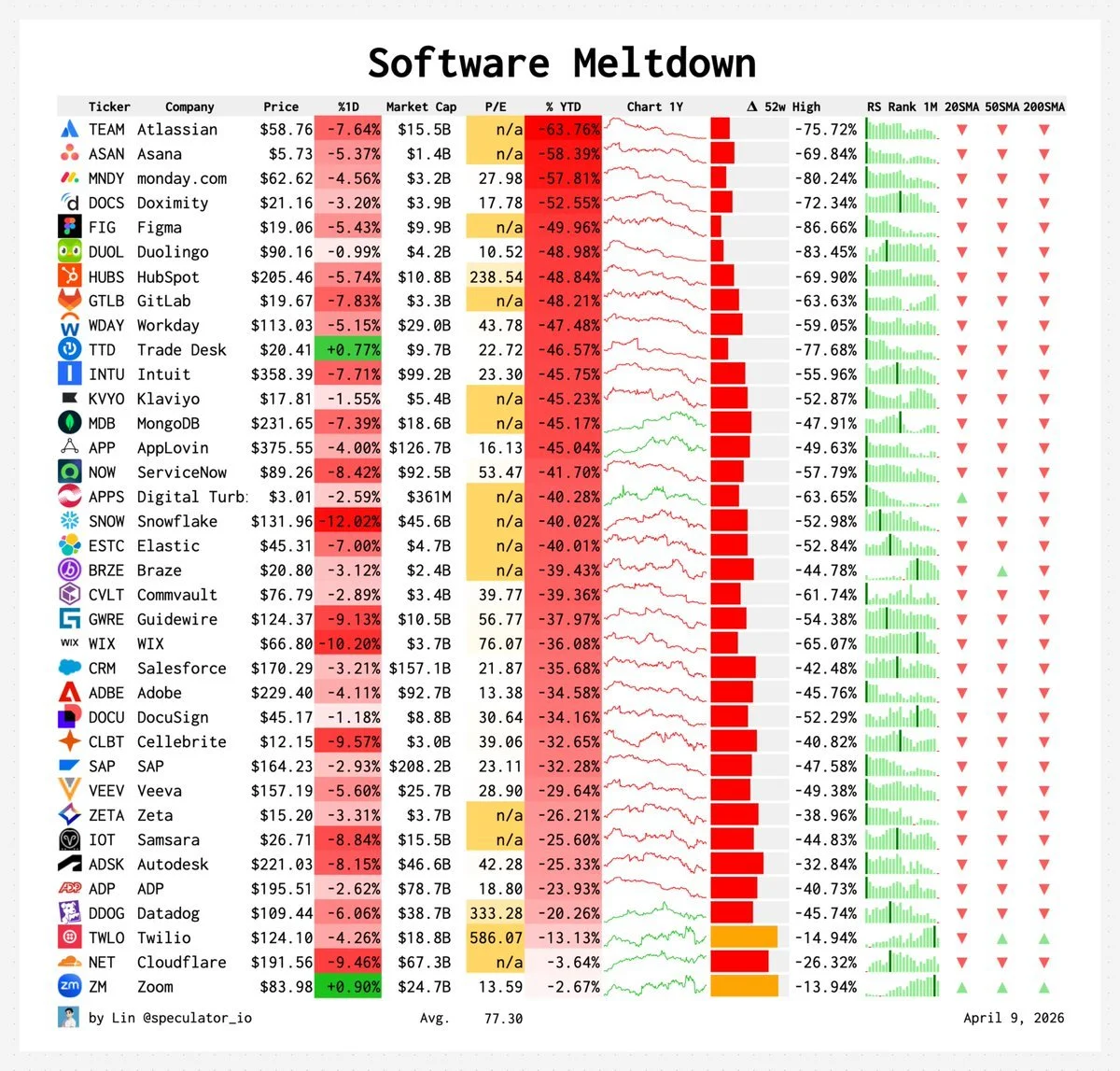

Almost all of them down 30–70% from their 52-week highs.

Sources: tweet

Computational functionalism claims consciousness comes from abstract computation alone, independent of physical substrate. This piece argues that's a mistake — the "Abstraction Fallacy." Computation isn't intrinsic to physics; it's a human-imposed way of describing physical processes.

The key distinction is between simulation (systems that mimic behavior, like today's AI) and instantiation (systems whose physical structure actually generates experience). From this view, algorithms alone can't produce consciousness. If AI ever becomes conscious, it will be because of its physical makeup, not its code.

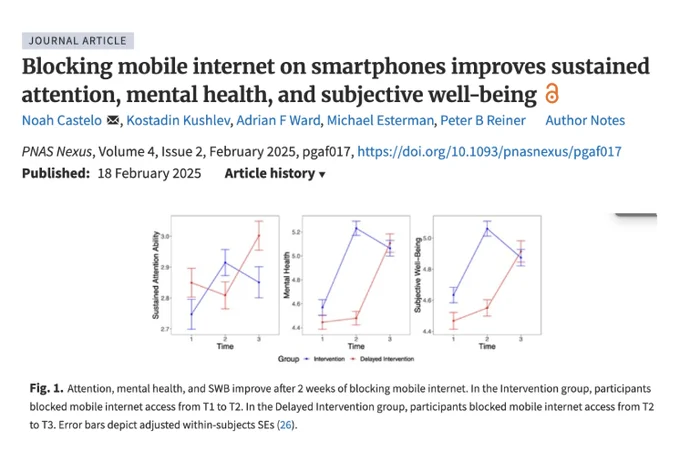

Bryan Johnson, scientists, and even my grandma says that staying on a screen makes you dumb. Reducing screen time correlates with reduction in depression more than antidepressants. Last month we showed a screenless phone.

When we say I go offline you're using the wrong framing — the correct one is to normalize living the real life and making going online

Sources: tweet

“By creating a series of genetic edits, Kind Bio can alter the development of an embryo so that it forms organs without also forming limbs, a central nervous system and brain. The result is a group of organs growing in the womb. It sounds like science fiction, but Kind Bio has already done this hundreds of times in mice and rats”

Sources: tweet, tweet 2, core memory

DeepMind just pointed out a pretty scary AI security gap: websites can tell when it's an agent — and show it totally different and malicious content than the one you see, for example:

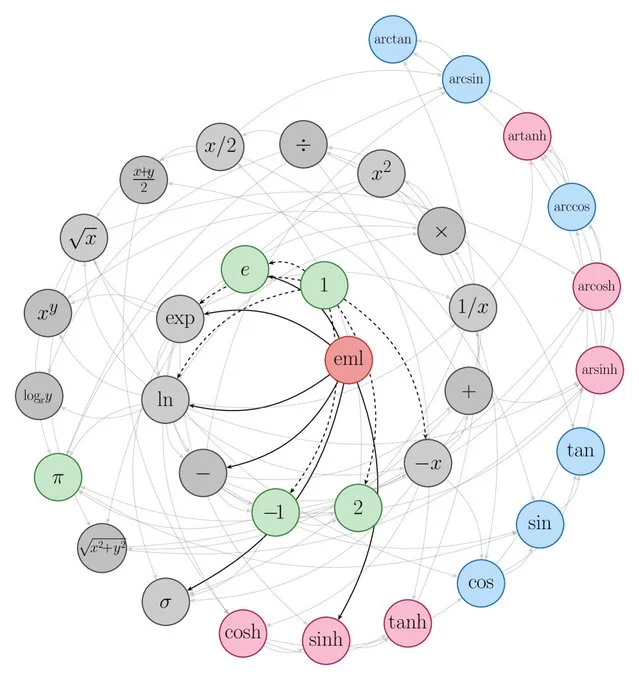

All elementary functions can be generated from just one binary operator.

We went around the moon! How cool is that.

Get the latest AI insights delivered to your inbox. No spam, unsubscribe anytime.

Founder, Engineer

AI Socratic

Founder of AI Socratic

Founder

Org 520

Roberto Stagi is building a startup focused on AI Agents, prioritizing real-world use cases. His latest project was a travel-booking agent. He emphasizes Eval Driven Development for improving AI output quality. Stagi previously worked for Bending Spoons, an Italian company that acquired Evernote, and is interested in meeting new people and sharing his insights about Bending Spoons.

Founder

Org 544

I'm a sofware enginner focused on infrastructure and web3. I'm studying AI and deep learning as a hobby.; Engineer; Currently interviewing for ML infra roles. Last AI system: A transformer that comments on chess moves; The chess comment transformer is my last AI project; Why interested: I was asked to give a presentation on my chess commentator transformer. My general value-add is the breadth of my software engineering experience.; Because I loved the one in May. I brought some value by giving a talk on my last project :) Hoping to bring more value through my ML infra work.

Engineer

Org 257

Amol Shah focuses on AI projects, specifically in application layer startup, investing, and building. His work includes State Space models, addressing model-training contamination in academic benchmarking, and model pruning. He is also involved in customer service deployments using multi-modality, as evidenced by his AI transformation roadmap. His recent insights are documented in the "State of AI in Business 2025 Report." He is interested in connecting with other AI product professionals.