This year, the focus was on physical AI. In my experience the split was something along the lines of: 30% Data Centers, 30% GPUs and Hardware, 30% Robotics, 10% Software.

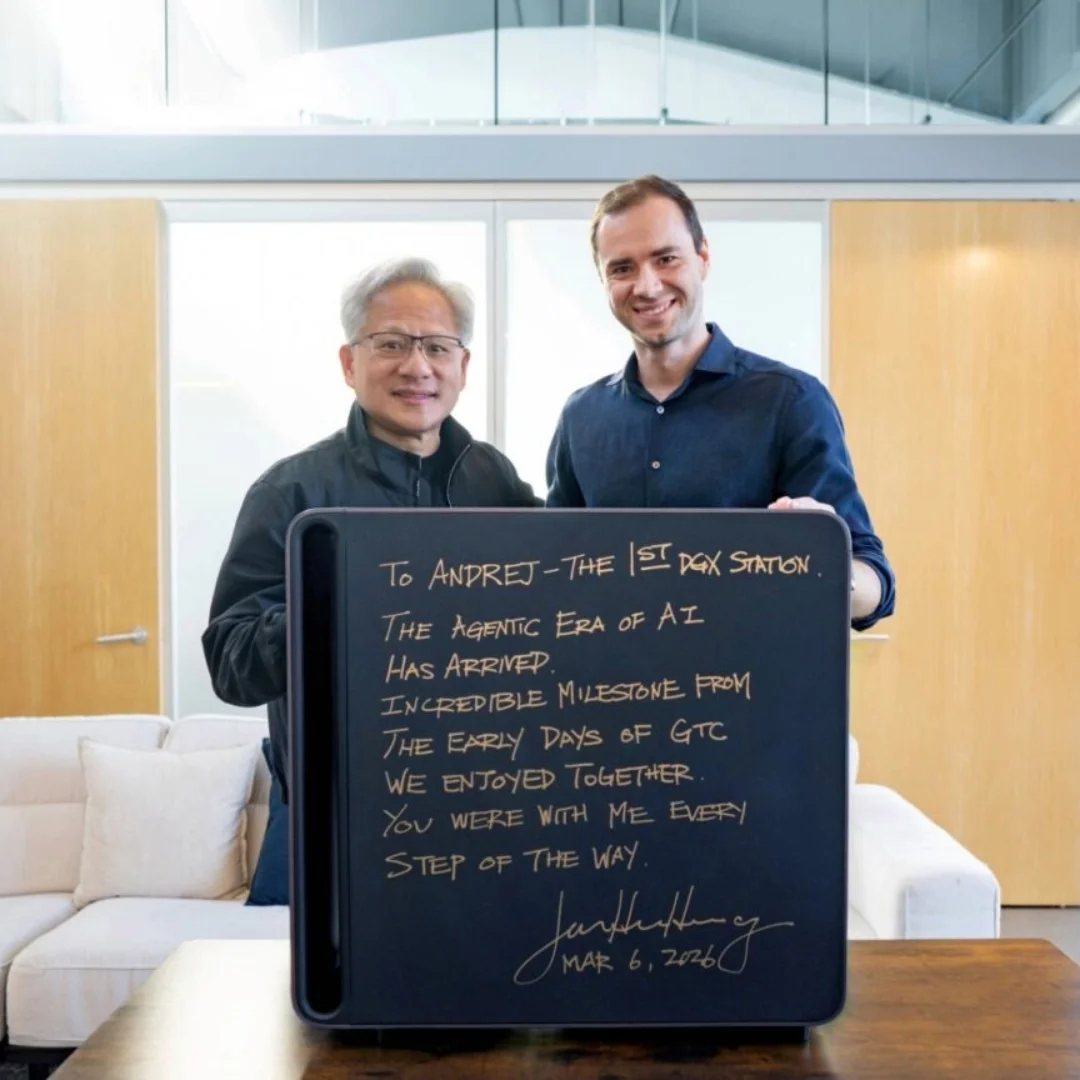

As you may have noticed, Karpathy has been shipping nonstop lately: Nanochat, AutoResearch, AgentHub (GitHub for agents). So Jensen gifted him a new machine.

Sources: tweet

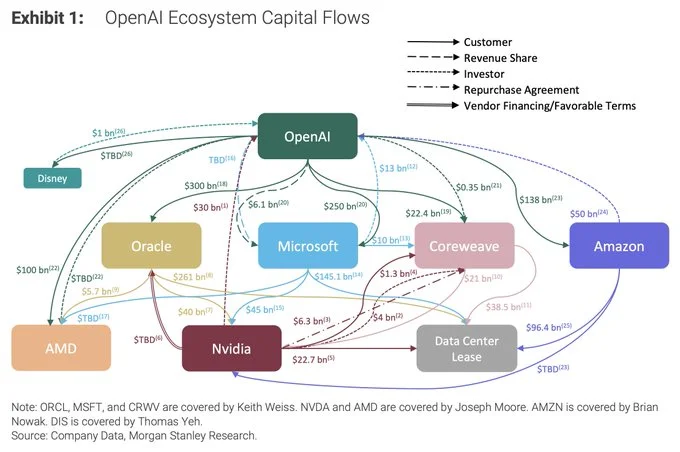

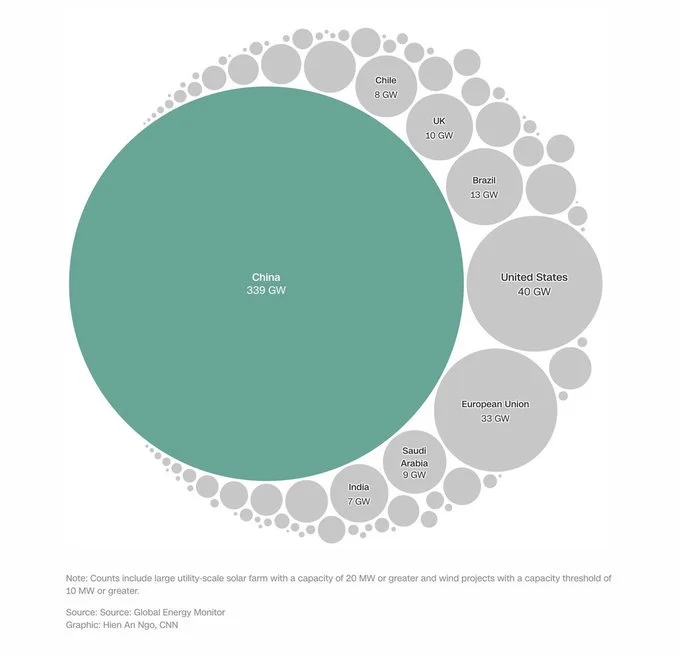

This is a hell of a graphic from Morgan Stanley

Anthropic just shipped a ton of new features for Claude Code; it's clearly becoming an OpenClaw competitor:

I do run claude --dangerously-skip-permissions! --auto-mode replaces that by having subagents check changes before confirming them.

/compact the context.

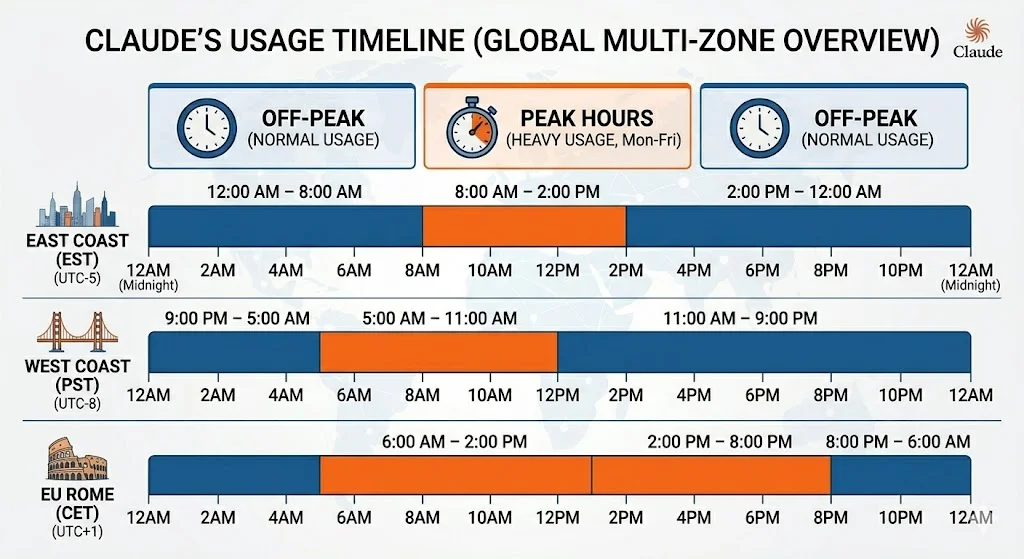

"To manage growing demand for Claude we're adjusting our 5 hour session limits for free/Pro/Max subs during peak hours. Your weekly limits remain unchanged. During weekdays between 5am–11am PT / 1pm–7pm GMT, you'll move through your 5-hour session limits faster than before."

Sources: tweet

A federal judge ruled that the Pentagon designated Anthropic as a supply chain risk as retaliation for the company publicly criticizing the Pentagon's position, calling it classic illegal First Amendment retaliation. This is a significant legal precedent for AI companies' right to publicly engage in policy debates.

Sources: tweet.

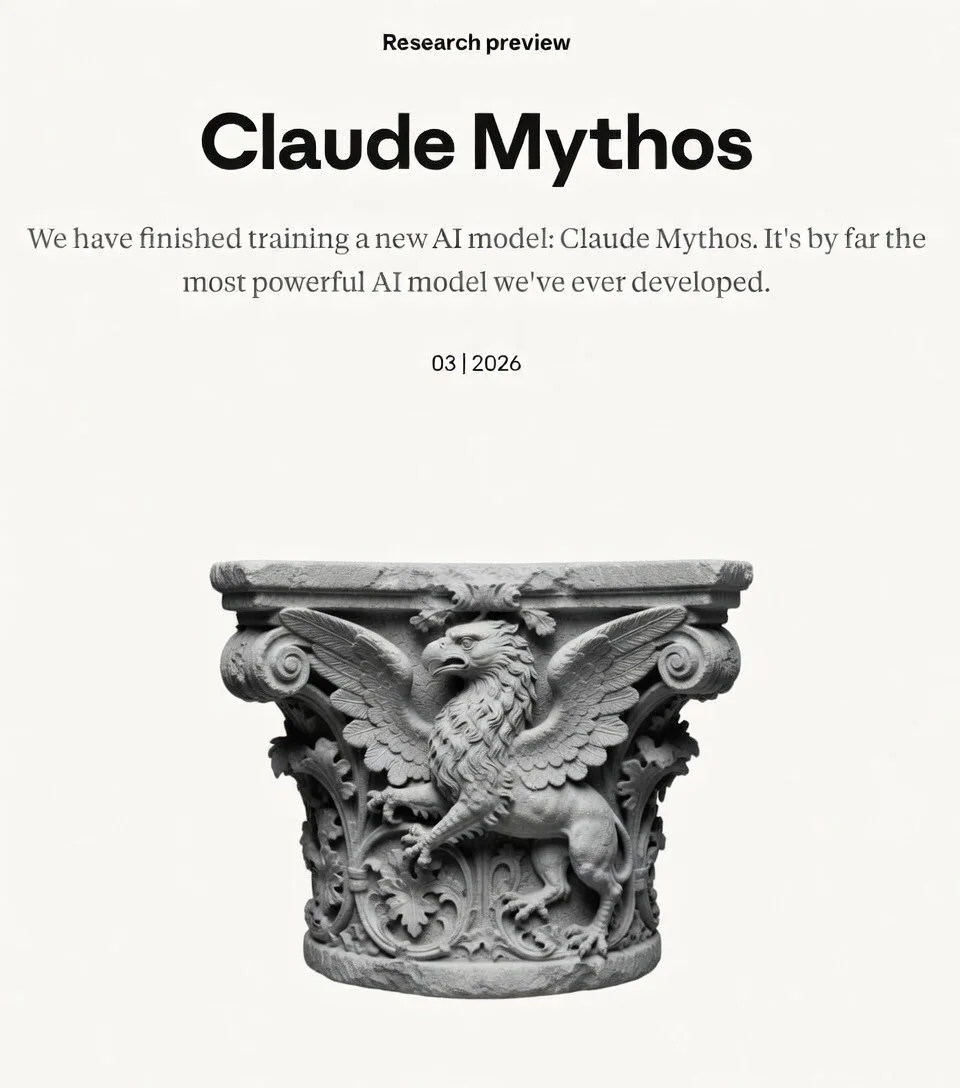

Anthropic accidentally exposed internal assets due to a CMS misconfiguration, revealing development of the Claude Mythos and Capybara models. Cybersecurity stocks crashed right after.

Google releases TurboQuant, a compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup with zero accuracy loss. The technique combines online vector quantization ideas from PolarQuant and earlier work. Community members have already implemented it for vLLM, fitting 4M+ KV-cache tokens on small devices, calling it the biggest open inference breakthrough of 2026.
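To get a feel for what a 6x smaller KV cache means in practice, here is a back-of-the-envelope sketch. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) and the 128K context are assumptions picked for illustration; they are not from the TurboQuant post, and the code only does the memory arithmetic, not the quantization itself.

```python
def kv_cache_bytes(tokens, layers=32, kv_heads=8, head_dim=128, bytes_per_value=2):
    """Bytes needed to cache keys and values for `tokens` tokens.

    The default shape is an assumed, illustrative model, not one from the post.
    """
    # Factor of 2: one tensor for keys and one for values, per layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


GIB = 1024 ** 3
context = 128_000  # assumed context length for the example

baseline = kv_cache_bytes(context)
compressed = baseline / 6  # the "at least 6x" reduction quoted above

print(f"16-bit KV cache: {baseline / GIB:.2f} GiB")
print(f"after 6x shrink: {compressed / GIB:.2f} GiB")
```

Under these assumptions each token costs 128 KiB of cache, so the savings grow linearly with context length.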

Sources: google blog, tweet, Simple Explainer

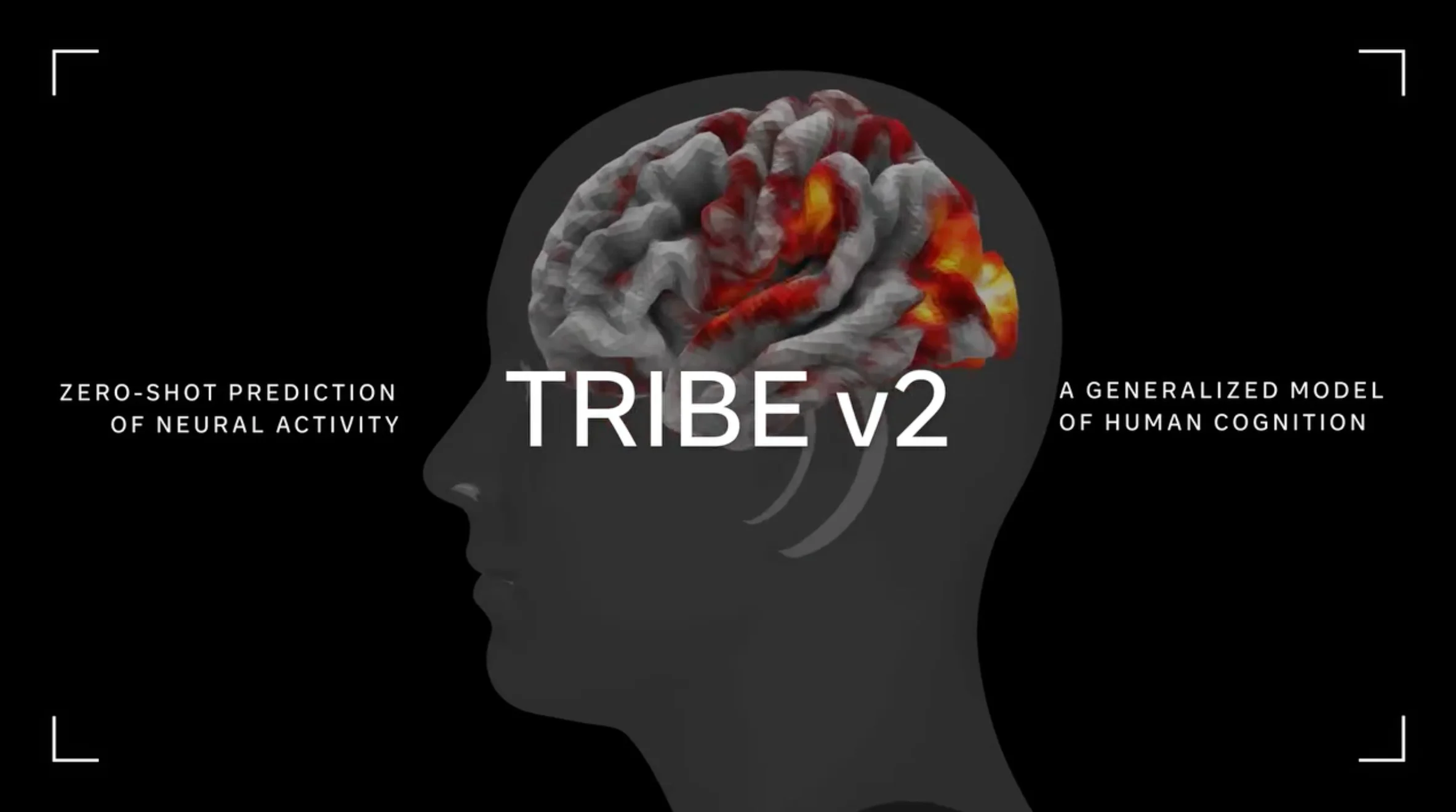

Meta FAIR introduces TRIBE v2 (Trimodal Brain Encoder), a foundation model trained on 500+ hours of fMRI recordings from 700+ people to predict how the human brain responds to sights and sounds. The paper suggests a paradigm shift in neuroscience toward unified predictive foundation models of brain and cognitive functions, achieving 70x higher resolution than previous approaches.

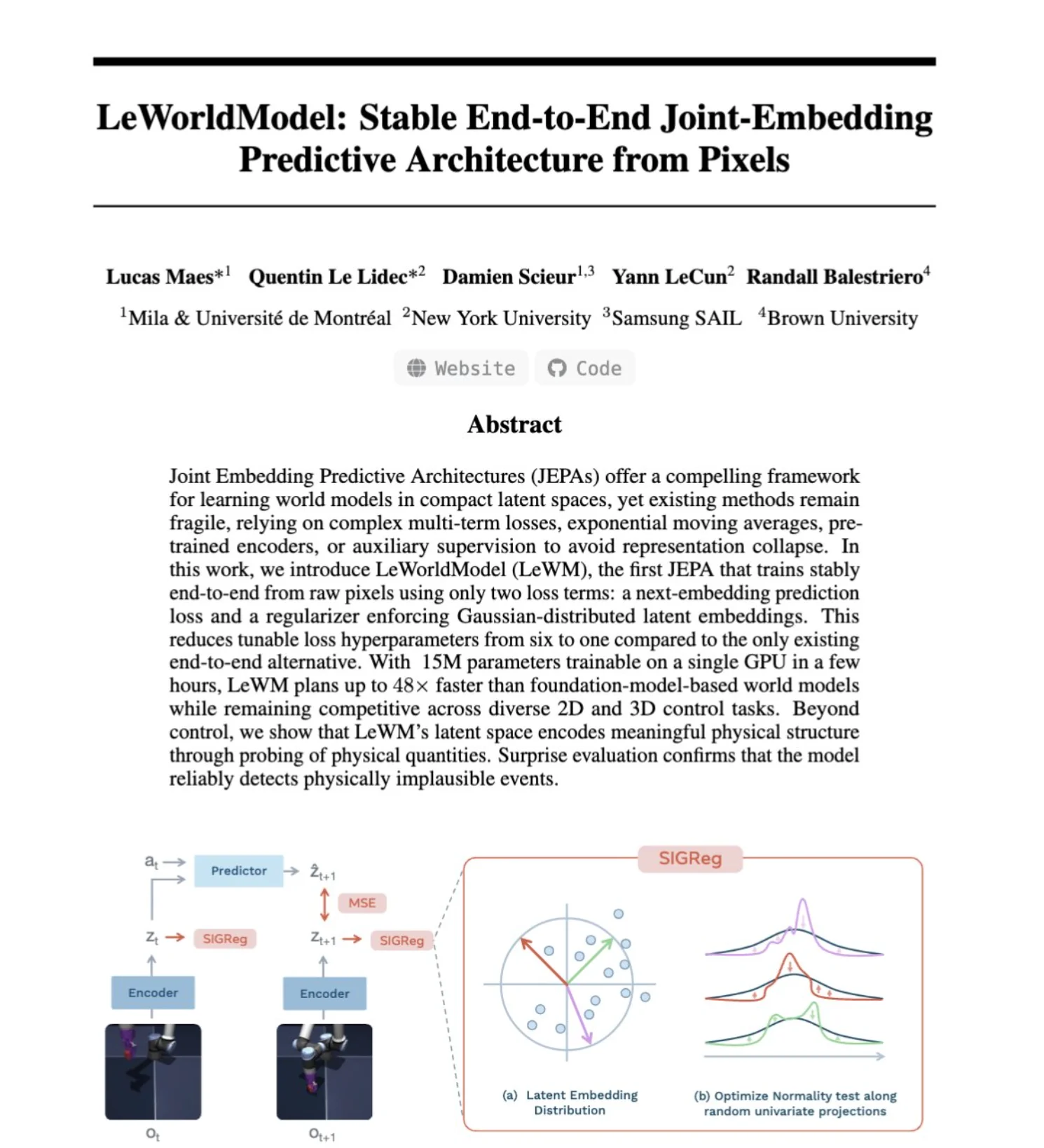

LeCun's team releases LeWorldModel, solving a key bottleneck of Joint-Embedding Predictive Architectures (JEPA) by making them trainable end-to-end from pixels. This advances the world model paradigm that many see as a critical shift beyond autoregressive language models.

Sources: tweet

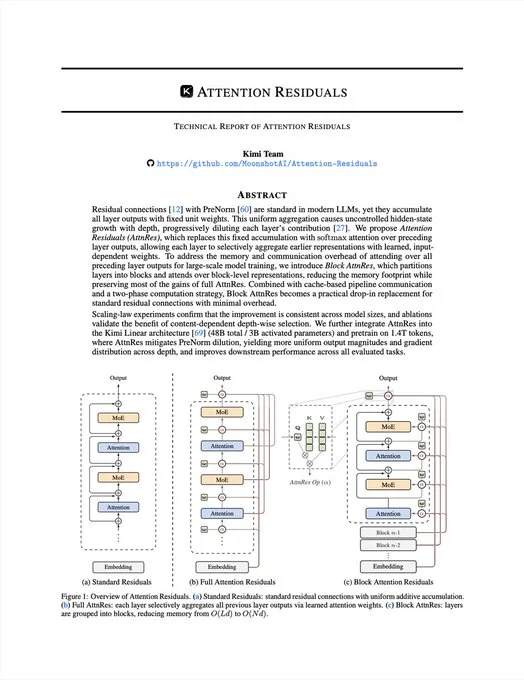

A more efficient way to reuse past information across layers without slowing models down.

Sources: tweet

TinyLoRA: Fine-Tuning 8B Parameter Models by Tweaking Just 13 Parameters

Researchers from Meta, Cornell, and CMU introduce TinyLoRA, scaling LoRA down to as few as 1 parameter. They turned an 8B parameter model into a math and reasoning powerhouse by fine-tuning just 13 parameters (26 bytes), demonstrating extreme parameter efficiency for model adaptation.
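For scale, here is a rough comparison against a conventional LoRA adapter. The hidden size (4096) and rank (8) are assumptions chosen as typical values, not numbers from the paper; only the 13 parameters and 26 bytes come from the summary above.

```python
def lora_params(d_in, d_out, rank):
    """Trainable parameters a standard LoRA adapter adds to one weight matrix."""
    # LoRA learns two low-rank factors: A (rank x d_in) and B (d_out x rank).
    return rank * d_in + d_out * rank


hidden = 4096  # assumed hidden size for an ~8B model
standard = lora_params(hidden, hidden, rank=8)  # assumed rank, single matrix

tiny_params = 13
tiny_bytes = tiny_params * 2  # 2 bytes per parameter at 16-bit precision

print(f"standard LoRA, one matrix: {standard} parameters")
print(f"TinyLoRA: {tiny_params} parameters, {tiny_bytes} bytes")
print(f"fraction of an 8B model: {tiny_params / 8e9:.2e}")
```

The 26-byte figure is consistent with storing each of the 13 parameters in 16 bits.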

Exclusive Self-Attention (XSA): Two-Line Change Improving Transformers Already Adopted in Practice

Exclusive Self-Attention (XSA) proposes a tiny two-line code change that stops attention from attending to itself, forcing focus on the rest of the sequence. It has already become a standard component in leading solutions for OpenAI's parameter golf challenge, demonstrating rapid real-world adoption.

Anthropic Economic Index: How Claude Usage Evolves with Experience

Anthropic's Economic Index reveals that longer-term Claude users iterate more carefully, are less likely to hand over full autonomy, attempt higher-value tasks, and receive more successful responses. This provides empirical insight into how human-AI collaboration patterns mature over time.

GradMem: Writing Context into LLM Memory via Test-Time Gradient Descent

GradMem introduces writing context into memory using test-time gradient descent rather than forward-pass encoding. By optimizing memory tokens with a reconstruction loss, a frozen model can compress long contexts into small memory without the lossy limitations of existing approaches.
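The core idea, optimizing a small memory against a frozen model with a reconstruction loss, can be sketched with a toy. Everything here is an assumption for illustration: the "frozen model" is just a fixed random linear map, the memory is a 4-number vector, and the names `frozen_decode` and `write_memory` are hypothetical. It is not the GradMem implementation, only the same optimization pattern in miniature.

```python
import random


def frozen_decode(W, memory):
    """Frozen toy 'model': reconstructs the context from memory via a fixed map W."""
    return [sum(w * m for w, m in zip(row, memory)) for row in W]


def loss(W, memory, context):
    """Reconstruction loss: sum of squared errors between decoded and true context."""
    return sum((r - c) ** 2 for r, c in zip(frozen_decode(W, memory), context))


def write_memory(W, context, steps=2000, lr=0.005):
    """Write context into memory by gradient descent; W is never updated."""
    k = len(W[0])
    memory = [0.0] * k
    for _ in range(steps):
        residual = [r - c for r, c in zip(frozen_decode(W, memory), context)]
        # Gradient of the squared error with respect to memory: 2 * W^T residual.
        grad = [2 * sum(W[i][j] * residual[i] for i in range(len(W))) for j in range(k)]
        memory = [m - lr * g for m, g in zip(memory, grad)]
    return memory


rng = random.Random(0)
n, k = 16, 4  # a 16-number context squeezed into a 4-number memory
W = [[rng.gauss(0, 1) for _ in range(k)] for _ in range(n)]
context = frozen_decode(W, [1.0, -2.0, 0.5, 3.0])  # a context the memory can represent

initial_loss = loss(W, [0.0] * k, context)
memory = write_memory(W, context)
final_loss = loss(W, memory, context)
print(f"loss before: {initial_loss:.4f}, after: {final_loss:.2e}")
```

Only the memory vector changes during the loop, which is the point: the model stays frozen and the context is "written" by optimization rather than by a forward pass.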

100M Token Context Without Collapse: <9% Degradation on 2×A800 GPUs

New research achieves 100M token context windows with less than 9% degradation from 16K, beating RAG + rerank + SOTA pipelines while running on just 2×A800 GPUs. This could fundamentally change how long-context applications are built.

LLM Internals: By Layer 10, Models Don't Know What Language They're Reading

A new blog post reveals that when feeding the same sentence in English and Chinese to an LLM, by layer 10 the model's internal representations become language-agnostic: it's "just thinking." This provides fascinating insight into how LLMs develop universal conceptual representations.

LLM Fused with Mini Computer: Switching Between Text and Machine Code in Single GPU

A developer demonstrates an LLM brain fused with a mini computer that can switch between generating text and generating/executing machine code, all running in a single GPU and torch graph. This represents a step toward unified compute-and-language models.

Columbia University Exposes Flaws in Private AI Inference: Prior Methods Used 280GB per Query

Columbia University researchers prove that the entire private AI inference industry built the wrong approach, with prior methods requiring 280GB per query and 60-second latency for full transformer encryption. Their work points to fundamentally more efficient architectures for privacy-preserving inference.

A system of the agents by the agents for the agents. But the agents are ret...

LiteLLM's PyPI release 1.82.8 was compromised in a major supply chain attack. A simple pip install litellm could exfiltrate SSH keys, AWS/GCP/Azure credentials, Kubernetes configs, API keys, crypto wallets, and more. The package was audited by Delve, a firm criticized for rubber-stamping security audits, highlighting systemic risks in the AI tooling supply chain.
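If you want a quick way to check whether an environment has the affected release, a minimal sketch looks like this. The blocklist only contains the one version named above; the helper names `is_compromised` and `check_installed` are hypothetical, and a real audit should rely on official advisories rather than a hardcoded set.

```python
from importlib import metadata

# Versions reported as compromised; only 1.82.8 is named in this post.
COMPROMISED = {"litellm": {"1.82.8"}}


def is_compromised(package, version, blocklist=COMPROMISED):
    """Return True if this exact package version is on the blocklist."""
    return version in blocklist.get(package, set())


def check_installed(package, blocklist=COMPROMISED):
    """Check the installed version of a package; None if it is not installed."""
    try:
        version = metadata.version(package)
    except metadata.PackageNotFoundError:
        return None
    return is_compromised(package, version, blocklist)


status = check_installed("litellm")
if status is None:
    print("litellm is not installed here")
elif status:
    print("WARNING: a compromised litellm version is installed")
else:
    print("installed litellm version is not on the blocklist")
```

Pinning exact versions in your requirements file narrows the window for picking up a bad release in the first place.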

Sources: tweet

ARC-AGI-3 is so far the only unsaturated agentic intelligence benchmark. Unlike benchmarks that test what models already know, it tests how they learn and acquire new skills, providing a formal measure of the gap between human and AI skill acquisition efficiency.

Sources: tweet

Team meeting in 2026

Gemini Embedding 2 is our first natively multimodal embedding model that maps text, images, video, audio and documents into a single embedding space, enabling multimodal retrieval and classification across different types of media, and it’s available now in public preview.

Sources: tweet

Founder, Engineer

AI Socratic
